Like many organisations out there, we have witnessed a surge in automation over the last few years, with a multitude of tools, frameworks, languages, etc., as we drive towards zero-click deployments for the plethora of squads/tribes within the business. During this time, I’ve slowly but surely witnessed talk about testing and automation/checking become synonymous (primarily from non-testers) as test personnel within the organisation spend more and more time writing/refining automated checks within their suites.

Some years ago when agile started becoming mainstream, there were proponents who were extremely vocal and forceful about agile practice (I recall Michael Bolton referring to them as Agilistas), almost to the point where they seemed somewhat rabid. I was all for someone getting excited about something positive, but by the same token I firmly believed they had to CALM DOWN!

The reason I mention this is that I see some resemblance in the human behaviour surrounding automation nowadays, particularly from non-testers: similarly frenzied (almost fanatical) endorsements of existing automation (irrespective of how good/bad/fast/slow/thoughtful/shallow it is) and calls for more, MoRe, MORE! automation, underpinned by wild gesticulation, loud voices and (in some cases) frothing mouths.

The concern I have, of course, is that amidst the frenzy about automation the distinction between testing and checking is misunderstood/forgotten/ignored, supplanted by the belief that everything valuable about testing is embedded within the automation artefacts that they lavish praise on.

A while back I got a chance to present to our VP, and I wanted to have something up my sleeve that clearly illustrated that testing and checking were not the same thing, and that also showed the importance each has to the other. I also wanted to show what would happen if you took testing out of the equation.

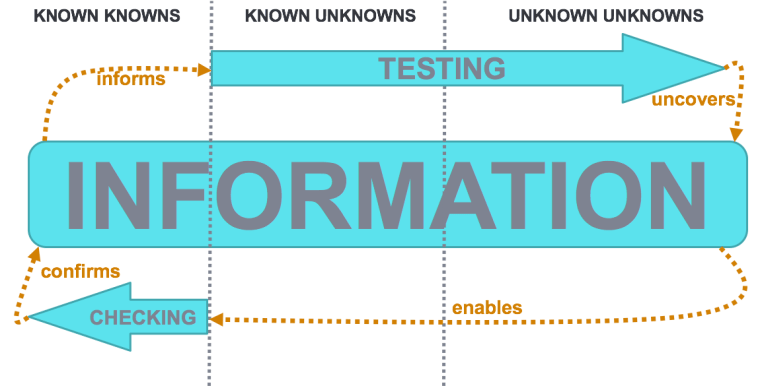

Dan Ashby’s recent blog post rang a few bells for me when he cleverly related activities to information. This was enhanced further by John Stevenson, who offered another unique model after MEWT 4 last year. After borrowing from both, I adapted the result to my own needs: something which I think nicely illustrates the synergy between testing and checking, while at the same time showing that they fall on different parts of the “information spectrum”:

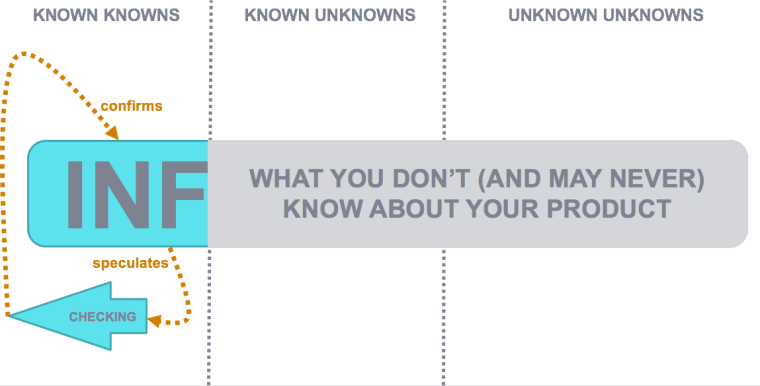

As you can see, I’ve borrowed from the former US Secretary of Defence, Donald Rumsfeld, too, and while I’m no real fan of his, I do like the fact that his somewhat famous epistemology (“there are known knowns, known unknowns and unknown unknowns”) applies so well to testing. One could say that the art of checking lies firmly in the known knowns domain, while testing lies in the known unknowns and unknown unknowns domains. More importantly, if you take testing out of this equation, here’s what I believe you’re left with:

If you’re not testing, you’re not uncovering any information, and in not uncovering any information, you’re confirming nothing more than speculation about what your product may (or may not) do. That leaves an enormous amount of information that you don’t (and may never) know about your product.

Can we really afford to focus on checking without doing any testing whatsoever?

I don’t think so.

I really like this. For me, your “testing” arrow cutting across the “known unknowns” and the “unknown unknowns” is making a really nice, subtle distinction between testing in the sense of it being a noun, and testing in the sense of being a verb…

as in the testing verb being an activity (e.g. “we test” or “testing is a process”) and the testing noun being an instance or a thing (e.g. “the test uncovered some information” or “I thought of a good test”)

To put it another way, the ACTIVITY of testing (i.e. the verb) is our process for uncovering some of our unknown unknowns, transforming them into known unknowns when we discover them. And then RUNNING the test (i.e. the noun) is the instance of our investigating (or questioning) the known unknown, translating it into a known known (or information).

Awesome update to the model mate!!

Depicts the synergy between testing and checking really well. It also, importantly, captures the fact that this is a continuous cycle. Any automated checks should be informed by the information uncovered by testing. Thanks for this!

Great post, love the models. I’d like to make a poster of your models and put them up at all the testers’ desks so the rest of the team (dev, prod, project) can see why we do what we do.

awesome elaboration

Shared it internally and a colleague wrote back: //So much our daily struggle in the test world. Projects only wants to pay for the checking part, not the testing…this drawing makes so much sense that it might convince my project manager to test more..:-)//

Awesome article and together with the one from Dan really helps to put things in the right perspective. Whenever I get confronted with this dilemma, usually it’s with people that also believe that “anyone can test”… This model will really help avoid misunderstandings here. Thanks a ton!